Contents

All blogs / How to build an AI Bomberman bot: introduction to A* pathfinding (Part 3/3)

How to build an AI Bomberman bot: introduction to A* pathfinding (Part 3/3)

July 26, 2021 • Joy Zhang • Tutorial • 6 minutes

Tutorial Parts

How to build an AI Bomberman bot (Part 1)

How to build an AI Bomberman bot (Part 2)

How to build an AI Bomberman bot (Part 3)

An introduction to A* pathfinding

The A* pathfinding (or A* search) algorithm is a popular technique for finding the shortest path between two points in an environment.

In this tutorial, we'll implement an A* pathfinding algorithm in Python to help our agent navigate to a target destination in the Dungeons and Data Structures environment. Make sure you check out Part 1 to get set up (and optionally Part 2 to create a simple bot).

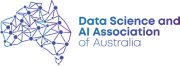

We'll use the A* pathfinding algorithm to help our knight find the shortest path to a destination.

We won't go deeply into the theory behind A* pathfinding here. Instead, check out these useful resources:

- Get a feel for the intuition behind A* pathfinding: Introduction to A* Pathfinding [1]

- The algorithm pseudocode we'll be implementing: Amit's thoughts on pathfinding [2]

The A* pathfinding algorithm

Provided a starting node, A* pathfinding works by:

- Searching its neighboring nodes

- Assigning them a total cost (i.e. time taken, tiles moved, etc) based on the cost taken to get there from the starting node plus the estimated cost of the cheapest path from the neighbor node to the destination

The goal of A* is to minimize:

f(n) = g(n) + h(n)

Where:

- n is the current node in the path

- g(n) is the cost of the path from the starting node to n

- h(n): a heuristic function that estimates the cost to move from n to the target cell

Example of f(n), g(n) and h(n) values in our environment.

What's the difference between A* and Dijkstra's algorithm?

The A* search algorithm can be considered an application of Dijkstra's algorithm, which adds heuristics to guide its search and achieve better performance. If you set the heuristic function *h(n)_ equal to 0, the A_ algorithm essentially boils down to Dijkstra's algorithm.

Getting started

The goal will be to help our agent successfully navigate to a given destination in the environment Dungeons and Data Structures:

If you've been following along with Part 1 and Part 2, we'll be restructuring our project to make it a bit more manageable.

To start, create 3 files in your working directory:

__init__.py— to be called by the main environment

from . import my_agent

def agent():

return my_agent.Agent()my_agent.py— holds our agent's behavior logic

from .helpers import *

class Agent:

def __init__(self):

'''

Place any initialization code for your agent here (if any)

'''

self.pending_actions = []

def next_move(self, game_state, player_state):

'''

This method is called each time your Agent is required to choose an action

'''

passhelpers.py— holds all our helper functions and classes (empty file for now)

Helper functions

We'll define some helper functions that will be useful for our A* pathfinding algorithm later. Add these to your helpers.py file.

Note: For consistency in the following sections, we'll refer to tiles as an (x,y) location of any tile on the map, and nodes as specific instances of the Node class object (which we'll cover later on). A node will contain a

locationproperty equivalent to a tile.

get_free_neighbors()

Given a tile, this will be used to get a list of surrounding tiles to search next.

# given our current location, return only surrounding tiles that are free

def get_free_neighbors(game_state, location):

x, y = location

neighbors = [{(x-1, y): 'l'}, {(x+1, y): 'r'}, {(x, y-1): 'd'}, {(x, y+1): 'u'}]

free_neighbors = []

for neighbor in neighbors:

for tile, direction in neighbor.items():

if game_state.is_in_bounds(tile):

if game_state.is_occupied(tile):

# check if this tile contains treasure or ammo

if game_state.entity_at(tile) == 't' or game_state.entity_at(tile) == 'a':

free_neighbors.append({tile:direction})

else:

free_neighbors.append({tile:direction})

return free_neighborsget_treasure()

We'll make our target destination the location of any treasure that spawns. (Alternatively, switch out treasure for ammo to make the agent pick up ammo around the map instead).

# finds treasure, if any

def get_treasure(game_state):

treasure = game_state.treasure

if treasure:

return treasure[0] # return first treasure on the listmanhattan_distance()

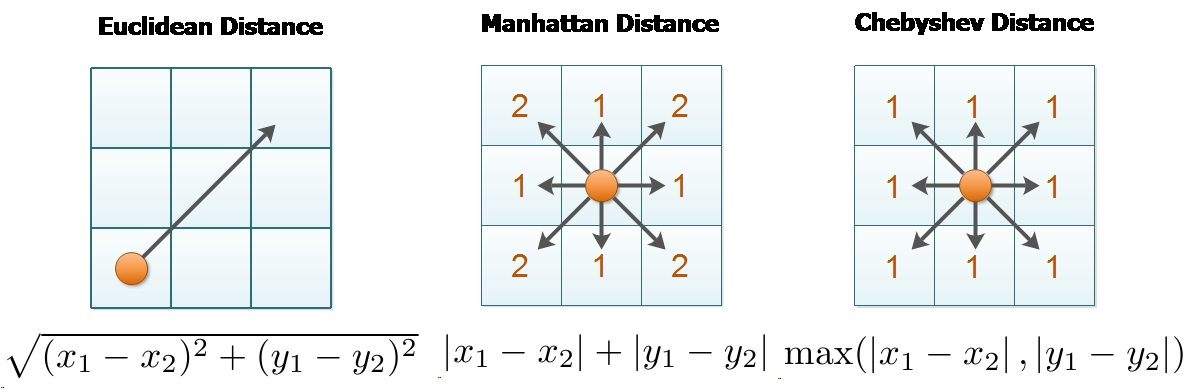

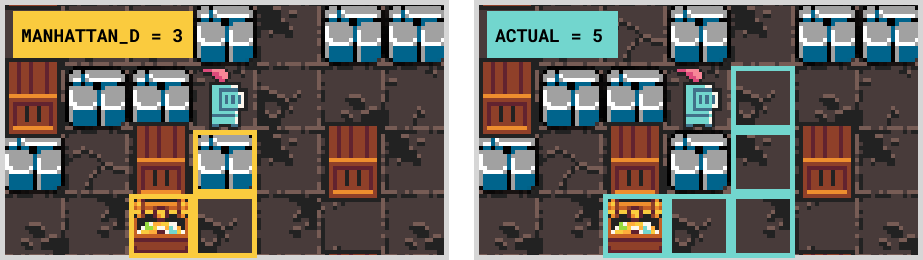

We mentioned earlier that the A* pathfinding algorithm is guided by a heuristic function $h(n)$. The heuristic function we'll use is the Manhattan Distance:

This Manhattan Distance is an approximation of the distance between our current node and the destination since it will not account for any obstacles. While not perfect, it will work for our purposes.

def manhattan_distance(start, end):

distance = abs(start[0] - end[0]) + abs(start[1] - end[1])

return distanceThe A* Algorithm

Here is the pseudocode we will be implementing from [2]:

OPEN = priority queue containing START

CLOSED = empty set

while lowest rank in OPEN is not the GOAL:

current = remove lowest rank item from OPEN

add current to CLOSED

for neighbors of current:

cost = g(current) + movementcost(current, neighbor)

if neighbor in OPEN and cost less than g(neighbor):

remove neighbor from OPEN, because new path is better

if neighbor in CLOSED and cost less than g(neighbor): ⁽²⁾

remove neighbor from CLOSED

if neighbor not in OPEN and neighbor not in CLOSED:

set g(neighbor) to cost

add neighbor to OPEN

set priority queue rank to g(neighbor) + h(neighbor)

set neighbor's parent to current

reconstruct reverse path from goal to start

by following parent pointersHere is our implementation (link to full code):

########################

### Pathfinder ###

########################

class Node:

def __init__(self, parent=None, location=None, action=None):

self.parent = parent

self.location = location

self.action = action

self.h = 0

self.g = 0

self.f = 0

def get_path(node):

path = []

while node.parent:

path.append(node)

node = node.parent

return path

def get_path_actions(path):

actions = []

for node in path:

actions.append(node.action)

return actions

def astar(game_state, start, target):

print("----A* STAR----")

path = []

# add starting node to open list

open_list = [Node(None, start, None)]

closed_list = []

# exit the loop early if no path can be found

# (the target is likely blocked off)

max_loops = 1000

counter = 0

# while lowest rank in OPEN is not the GOAL:

while len(open_list) > 0 and counter <= max_loops:

# find the node with the lowest rank

curr_node = open_list[0]

curr_index = 0

for index, node in enumerate(open_list):

if node.f < curr_node.f:

curr_node = node

curr_index = index

# check if this node is the goal

if curr_node.location == target:

print(f"~~~~~~~FOUND TARGET~~~~~~~")

path = get_path(curr_node)

return path

# current = remove lowest rank item from OPEN

# add current to CLOSED

del open_list[curr_index]

closed_list.append(curr_node)

# get neighbors of current

neighbors = get_free_neighbors(game_state, curr_node.location)

neighbor_nodes = []

for neighbor in neighbors:

for location, action in neighbor.items():

neighbor_nodes.append(Node(None, location, action))

# for neighbors of current:

for neighbor in neighbor_nodes:

# used for loop behavior

in_closed = False

in_open = False

# cost = g(current) + movementcost(current, neighbor)

cost = curr_node.g + 1

# if neighbor in OPEN and cost less than g(neighbor):

# remove neighbor from OPEN, because new path is better

for index, node in enumerate(open_list):

if neighbor.location == node.location and cost < neighbor.g:

del open_list[index]

in_open = True

# if neighbor in CLOSED and cost less than g(neighbor): ⁽²⁾

# remove neighbor from CLOSED

for index, node in enumerate(closed_list):

if neighbor.location == node.location and cost < neighbor.g:

del closed_list[index]

in_closed = True

# if neighbor not in OPEN and neighbor not in CLOSED:

# set g(neighbor) to cost

# add neighbor to OPEN

# set priority queue rank to g(neighbor) + h(neighbor)

# set neighbor's parent to current

if not in_open and not in_closed:

neighbor.g = cost

open_list.append(neighbor)

neighbor.h = manhattan_distance(neighbor.location, target)

neighbor.f = neighbor.g + neighbor.h

neighbor.parent = curr_node

counter += 1

print(f"---NO PATH FOUND---")Add this to helpers.py.

Note that we've added get_path_actions(). This will return the actions our agent will need to take in order to get to the destination (e.g. ['u', 'l', ...])

Adding pathfinding to our agent

To check that everything is working, update the next_move() method in your my_agent.py file:

def next_move(self, game_state, player_state):

print(f"tick: {game_state.tick_number}")

# treasure spawns randomly

treasure = get_treasure(game_state)

if len(self.pending_actions) > 0:

action = self.pending_actions.pop()

elif treasure:

path = astar(game_state, player_state.location, treasure)

if path:

actions = get_path_actions(path)

print(f"--ACTIONS: {actions}")

for action in actions:

self.pending_actions.append(action)

action = self.pending_actions.pop()

else:

action = ''

else:

action = ''

return actionIn your terminal, run:

coderone-dungeon my_agent --interactive(The --interactive flag will allow you to play as the Knight)

If you've set everything up successfully, you should see your agent picking up treasure around the map (treasure spawns randomly).

----A* STAR----

~~~~~~~FOUND TARGET~~~~~~~

--ACTIONS: ['d', 'd', 'd', 'r', 'r', 'd', 'r', 'r', 'r']Note: we built our path from the destination node backward so this list of actions is also reversed. This is fine since we use pop() which takes actions from the end of the list.

Conclusion

You should now have an agent that implements the A* pathfinding algorithm to navigate to a target destination. From here, you can continue improving your agent or try implementing A* pathfinding in your own game.

If you're looking for more environments to practice AI programming, I recommend checking out our list of AI programming competitions.